This article describes, from an infrastructure point of view, how we use Doctrine sharding together with Amazon Aurora Autoscaling on our databases.

Nuvola is our main project: a multi-tenant application that lets Italian schools manage their activities digitally. In a previous article we described how we implemented sharding with Doctrine. That solution has allowed us to scale our databases horizontally, and it works very well.

The need to introduce Amazon Aurora Autoscaling stems from cost optimization, one of the pillars of the AWS Well-Architected Framework.

Our application is mainly used in well-defined time slots, and the workload rises sharply during them. We were therefore forced to size the RDS instances for the time slot with the highest load, which left them oversized for the rest of the day, when the load is at its lowest.

We therefore chose to switch to Amazon Aurora, which makes it quick and easy to manage Master / Slave replicas and adds Autoscaling for up to 15 replicas. This lets us keep smaller instances always on throughout the day, while Amazon Aurora adds instances through Autoscaling when the load increases.

We will describe in another article how we handled Master / Slave management at the application level with Symfony and Doctrine.

What matters here is the configuration in doctrine_conf.yml:

```yaml
doctrine:
    dbal:
        connections:
            default:
                logging: false
                profiling: false
            name_shard_1:
                charset: UTF8
                dbname: name_shard_1
                host: database-1.madisoft.it:database-1-ro.madisoft.it
                id: 3
                password: '%database_password%'
                user: '%database_user%'
```

The line we care about is the host setting. All write, update and delete requests go to the first host (the master, database-1.madisoft.it), while all reads, such as SELECT statements, go to the second host (the slave, database-1-ro.madisoft.it).

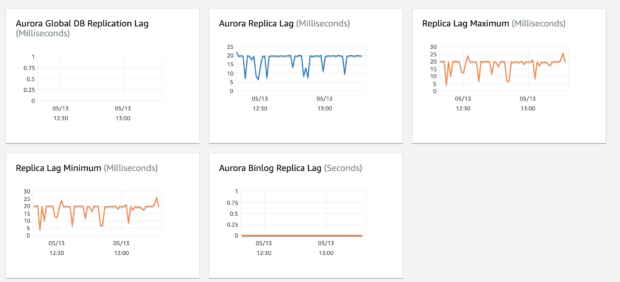
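To make the convention concrete, here is a minimal Python sketch of how a `master:slave` host string like the one above could be split and used to pick a target for each statement. It is only an illustration: the function names and the `students` table are hypothetical, and this is not how Doctrine or our application performs the routing (that is the subject of the separate article mentioned earlier).

```python
def split_hosts(host_value):
    """Split 'master:slave' into (master, slave).

    With a single host, both roles fall back to that host.
    """
    master, _, slave = host_value.partition(":")
    return master, slave or master


def pick_host(host_value, sql):
    """Return the slave for SELECT statements, the master otherwise."""
    master, slave = split_hosts(host_value)
    words = sql.split()
    is_read = bool(words) and words[0].upper() == "SELECT"
    return slave if is_read else master


host = "database-1.madisoft.it:database-1-ro.madisoft.it"
print(pick_host(host, "SELECT * FROM students"))     # database-1-ro.madisoft.it
print(pick_host(host, "UPDATE students SET x = 1"))  # database-1.madisoft.it
```

Anything that is not clearly a read is sent to the master, which is the safe default when a statement cannot be classified.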

Keep in mind that there is a replication lag between master and slave, which Amazon states is well below 100 ms. In our observations it averages 20 to 30 ms.
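One practical consequence of this lag is that a read issued immediately after a write may not yet see that write on the slave. A common mitigation, sketched below in Python, is to keep sending reads to the master for a short window after each write. This is an illustration under stated assumptions, not a description of our application: the class name, the 100 ms default window and the injectable clock are all hypothetical.

```python
import time


class LagAwareRouter:
    """Send reads to the master for a short window after each write."""

    def __init__(self, master, slave, lag_window=0.1, clock=time.monotonic):
        self.master = master
        self.slave = slave
        self.lag_window = lag_window  # seconds; assumed upper bound on the lag
        self._clock = clock           # injectable so the logic can be tested
        self._last_write = None

    def host_for(self, is_write):
        now = self._clock()
        if is_write:
            self._last_write = now
            return self.master
        recently_wrote = (
            self._last_write is not None
            and now - self._last_write < self.lag_window
        )
        return self.master if recently_wrote else self.slave
```

The trade-off is a little extra read traffic on the master in exchange for reads that reflect the write just made.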

As is our custom, we created the infrastructure entirely as code. Specifically, we used Ansible and Python.

We have already described how to use Ansible to create an RDS infrastructure. In this guide we only report the differences from that previous guide.

To use Aurora you must first create a cluster:

```yaml
- name: INFRASTRUCTURE RDS | Create Cluster Aurora RDS
  command: "aws rds create-db-cluster --db-cluster-identifier {{ project }}-{{ rds_env }}-database-rds-{{ item }}
            --database-name {{ rds_database_name }}
            --engine {{ rds_database_engine }}
            --engine-version {{ rds_database_version }}
            --db-cluster-parameter-group-name {{ rds_database_parameter_group }}
            --{{ (rds_database_encrypt_storage == 'yes') | ternary('storage-encrypted','no-storage-encrypted') }}
            --master-username {{ mysql.root_username }}
            --master-user-password {{ mysql.root_password }}
            --vpc-security-group-ids {{ security_group.group_id }}
            --port {{ rds_database_port }}
            --db-subnet-group-name {{ project }}_{{ rds_env }}_rds_vpc_subnet
            --preferred-maintenance-window {{ rds_database_maint_window }}
            --backup-retention-period {{ rds_database_backup_retention }}
            --preferred-backup-window {{ rds_database_backup_window }}
            --deletion-protection
            --tags 'Key=Name,Value={{ project }}_{{ rds_env }}_database_{{ item }}' 'Key=billing,Value={{ billing_tag_value }}' \
                   'Key=env,Value={{ project }}_{{ rds_env }}' 'Key=role,Value=database' 'Key=type,Value={{ type_tag_value }}'"
  register: rds_create_gathering
  with_sequence: start="{{ rds_database_count_start }}" end="{{ rds_database_count_end }}"
  when: rds_delete_all == "no" and rds_restore_instance == "no"
```

For the moment we have not been able to use the Ansible module to create the cluster, because it does not yet cover everything we need. We therefore decided to call the AWS command line interface (CLI) instead.
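As an alternative sketch, the same call can be expressed in Python with boto3, whose `create_db_cluster` method accepts parameters corresponding to the CLI flags above. The helper below only builds the keyword arguments, so it runs without AWS credentials. The function name and the shape of the `cfg` dictionary are hypothetical, and only a subset of the flags is shown.

```python
def cluster_kwargs(cfg, item):
    """Build create_db_cluster arguments for sequence item `item`."""
    name = f"{cfg['project']}-{cfg['rds_env']}-database-rds-{item}"
    return {
        "DBClusterIdentifier": name,
        "DatabaseName": cfg["database_name"],
        "Engine": cfg["engine"],
        "EngineVersion": cfg["engine_version"],
        "StorageEncrypted": cfg["encrypt_storage"] == "yes",
        "MasterUsername": cfg["root_username"],
        "MasterUserPassword": cfg["root_password"],
        "DeletionProtection": True,
        "Tags": [
            {"Key": "Name",
             "Value": f"{cfg['project']}_{cfg['rds_env']}_database_{item}"},
            {"Key": "role", "Value": "database"},
        ],
    }


# Usage (requires boto3 and AWS credentials), one cluster per sequence item.
# Like with_sequence, the range includes both the start and the end value:
#   rds = boto3.client("rds", region_name=region)
#   for item in range(count_start, count_end + 1):
#       rds.create_db_cluster(**cluster_kwargs(cfg, item))
```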

Once the cluster has been created, we can create the master instance:

```yaml
- name: INFRASTRUCTURE RDS | Create Instance RDS
  command: "aws rds create-db-instance --db-instance-identifier {{ project }}-{{ rds_env }}-database-rds-{{ item }}
            --db-instance-class {{ database_instance_type }}
            --db-cluster-identifier {{ project }}-{{ rds_env }}-database-rds-{{ item }}
            --{{ (rds_database_multi_zone == 'no') | ternary('no-multi-az','multi-az') }}
            --engine {{ rds_database_engine }}
            --db-parameter-group-name {{ rds_database_parameter_group }}
            --option-group-name {{ rds_database_option_group }}
            --{{ (rds_database_encrypt_storage == 'yes') | ternary('storage-encrypted','no-storage-encrypted') }}
            --db-subnet-group-name {{ project }}_{{ rds_env }}_rds_vpc_subnet
            --{{ (rds_database_publicly_accessible == 'yes') | ternary('publicly-accessible','no-publicly-accessible') }}
            --preferred-maintenance-window {{ rds_database_maint_window }}
            --{{ (rds_database_upgrade == 'yes') | ternary('auto-minor-version-upgrade','no-auto-minor-version-upgrade') }}
            --tags 'Key=Name,Value={{ project }}_{{ rds_env }}_database_{{ item }}' 'Key=billing,Value={{ billing_tag_value }}' \
                   'Key=env,Value={{ project }}_{{ rds_env }}' 'Key=role,Value=database' 'Key=type,Value={{ type_tag_value }}'"
  register: rds_create_gathering
  with_sequence: start="{{ rds_database_count_start }}" end="{{ rds_database_count_end }}"
  when: rds_delete_all == "no"
```

When the cluster is created, Aurora automatically assigns it two endpoints:

```
db_cluster_identifier.cluster-abcd1234.region.rds.amazonaws.com
db_cluster_identifier.cluster-ro-abcd1234.region.rds.amazonaws.com
```

The first points to the master instance (read and write).

The second points to the slave instance (read only).

When the cluster has only one instance (which is therefore the master), both endpoints point to the same server through dedicated CNAME records.

When a slave is started in the cluster, Aurora automatically repoints the read-only record db_cluster_identifier.cluster-ro-abcd1234.region.rds.amazonaws.com to the new read-only instance. When the slave is turned off, Aurora performs the reverse operation and points the record back to the master.
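The two endpoints can also be read programmatically. The sketch below uses the `describe_db_clusters` call of the boto3 RDS client, whose response exposes them as `Endpoint` and `ReaderEndpoint`, and joins them in the `master:slave` form used by the `host` setting shown earlier. The function name is hypothetical, and the region in the usage comment is only an example. Whether the Aurora endpoints go directly into the configuration or sit behind your own DNS names, as in our example, is a separate choice.

```python
def doctrine_host(rds_client, cluster_id):
    """Return 'writer_endpoint:reader_endpoint' for an Aurora cluster."""
    response = rds_client.describe_db_clusters(DBClusterIdentifier=cluster_id)
    cluster = response["DBClusters"][0]
    return f"{cluster['Endpoint']}:{cluster['ReaderEndpoint']}"


# Usage (requires boto3 and AWS credentials):
#   import boto3
#   rds = boto3.client("rds", region_name="eu-west-1")
#   print(doctrine_host(rds, "db_cluster_identifier"))
```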

Once the Aurora cluster is running with a master and a slave, you can also enable autoscaling if desired. Every slave that autoscaling creates is also added to the read-only endpoint.

If your traffic is spread evenly throughout the day, you can enable autoscaling and leave it always on. In our case, traffic differs significantly between time slots and drops sharply at night and on holidays, so we decided to rely on a Lambda function to manage Autoscaling.

There may be periods in which we do not want a slave running by default; the Lambda function lets us start or stop the slave automatically, whenever we need to.
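The decision of when the slave should be running can be as simple as a calendar check. The following sketch shows one possible shape for it. The schedule (weekdays, 07:00 to 19:00) and the function name are purely hypothetical and do not reflect our actual time slots.

```python
from datetime import date, datetime

# Hypothetical schedule: Monday to Friday, 07:00 to 19:00 local time.
BUSY_WEEKDAYS = range(0, 5)
BUSY_START_HOUR = 7
BUSY_END_HOUR = 19


def replica_wanted(now, holidays=frozenset()):
    """Return True if the slave should be running at `now`."""
    if now.date() in holidays:
        return False
    if now.weekday() not in BUSY_WEEKDAYS:
        return False
    return BUSY_START_HOUR <= now.hour < BUSY_END_HOUR


# 4 March 2024 is a Monday.
print(replica_wanted(datetime(2024, 3, 4, 9, 0)))   # True
print(replica_wanted(datetime(2024, 3, 4, 23, 0)))  # False
print(replica_wanted(datetime(2024, 3, 4, 9, 0),
                     holidays={date(2024, 3, 4)}))  # False
```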

The function can be invoked either through CloudWatch rules (which work like a cron) or through a CloudWatch alarm tied to high CPU usage or to a high number of database connections. The alarm publishes to SNS (Simple Notification Service), which in turn invokes the Lambda function.

This lets us manage database usage in a much more optimized way, based on the period of the year, the day of the week or the most critical time slots.
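Because the same function receives both kinds of invocation, its first job is to tell them apart. Below is a minimal sketch of that dispatch, not our production handler. It relies on two documented event shapes: scheduled CloudWatch events carry `"source": "aws.events"`, while SNS deliveries carry a `Records` list whose `Sns.Message` field holds the alarm as a JSON string. The action names returned are hypothetical placeholders.

```python
import json


def classify_event(event):
    """Return ('schedule', None), ('alarm', alarm_name) or ('ignored', None)."""
    if event.get("source") == "aws.events":
        return "schedule", None
    records = event.get("Records", [])
    if records and records[0].get("EventSource") == "aws:sns":
        message = json.loads(records[0]["Sns"]["Message"])
        if message.get("NewStateValue") == "ALARM":
            return "alarm", message.get("AlarmName")
    return "ignored", None


def lambda_handler(event, context):
    """Map the trigger to an action; the real work would happen here."""
    kind, alarm_name = classify_event(event)
    if kind == "schedule":
        action = "apply-schedule"   # hypothetical: follow the calendar
    elif kind == "alarm":
        action = "scale-up"         # hypothetical: react to the load alarm
    else:
        action = "none"
    return {"trigger": kind, "alarm": alarm_name, "action": action}
```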

Below is an extract of the Lambda function code that creates the autoscaling scaling policy:

```python
import boto3

# `region` and `kwargs` come from the surrounding function code,
# which is not shown in this extract.
conn_application = boto3.client('application-autoscaling', region_name=region)
response = conn_application.put_scaling_policy(
    PolicyName=kwargs['name'],
    ServiceNamespace=kwargs['service_type'],
    ResourceId=kwargs['resource'],
    ScalableDimension=kwargs['scalable_dimension'],
    PolicyType=kwargs['policy_type'],
    TargetTrackingScalingPolicyConfiguration={
        'TargetValue': kwargs['target'],
        'PredefinedMetricSpecification': {
            'PredefinedMetricType': kwargs['metric']
        },
        'ScaleOutCooldown': kwargs['scale_out'],
        'DisableScaleIn': kwargs['disable_scale_in'],
        'ScaleInCooldown': kwargs['scale_in']
    }
)
```

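The scalable target is registered as follows (Application Auto Scaling requires this registration before a scaling policy can be attached to the resource):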

```python
response = conn_application.register_scalable_target(
    ServiceNamespace=kwargs['service_type'],
    ScalableDimension=kwargs['scalable_dimension'],
    ResourceId=kwargs['name'],
    MinCapacity=kwargs['min_capacity'],
    MaxCapacity=kwargs['max_capacity']
)
```

Both the scaling policy and the scalable target are required for Amazon Aurora Autoscaling to work.
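Since the extracts above take their values from `kwargs`, it may help to see what those values look like for an Aurora cluster. The sketch below builds both argument sets using the identifiers Application Auto Scaling defines for Aurora: the `rds` namespace, a `cluster:<identifier>` resource id, the `rds:cluster:ReadReplicaCount` dimension and the `RDSReaderAverageCPUUtilization` predefined metric. The function name, the policy name, the 60% CPU target and the 300-second cooldowns are hypothetical example values, not our production settings.

```python
def aurora_autoscaling_kwargs(cluster_id, min_replicas=1, max_replicas=15,
                              target_cpu=60.0):
    """Build arguments for register_scalable_target and put_scaling_policy."""
    common = {
        "ServiceNamespace": "rds",
        "ResourceId": f"cluster:{cluster_id}",
        "ScalableDimension": "rds:cluster:ReadReplicaCount",
    }
    target = dict(common, MinCapacity=min_replicas, MaxCapacity=max_replicas)
    policy = dict(
        common,
        PolicyName=f"{cluster_id}-cpu-target",   # hypothetical naming scheme
        PolicyType="TargetTrackingScaling",
        TargetTrackingScalingPolicyConfiguration={
            "TargetValue": target_cpu,
            "PredefinedMetricSpecification": {
                "PredefinedMetricType": "RDSReaderAverageCPUUtilization"
            },
            "ScaleOutCooldown": 300,   # seconds, example value
            "ScaleInCooldown": 300,    # seconds, example value
            "DisableScaleIn": False,
        },
    )
    return target, policy


# Usage (requires boto3 and AWS credentials):
#   client = boto3.client("application-autoscaling", region_name=region)
#   target, policy = aurora_autoscaling_kwargs("my-cluster")
#   client.register_scalable_target(**target)   # the target comes first
#   client.put_scaling_policy(**policy)
```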

Finally, the slave is created, if you had previously decided to turn it off (autoscaling needs at least one active slave in order to work):

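```python
import boto3

# `region`, `engine`, `name_cluster`, `name_instance` and `instance_type`
# come from the surrounding function code, which is not shown in this extract.
conn = boto3.client('rds', region_name=region)
response = conn.create_db_instance(
    Engine=engine,
    DBClusterIdentifier=name_cluster,
    DBInstanceIdentifier=name_instance,
    DBInstanceClass=instance_type
)
```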
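Before creating the slave, it can be useful to check whether the cluster already has one, so that repeated invocations do not fail or create duplicates. The following sketch wraps the call above in such a check, using the `DBClusterMembers` list and its `IsClusterWriter` flag returned by `describe_db_clusters`. The function name is hypothetical and error handling is omitted.

```python
def ensure_reader(rds_client, cluster_id, instance_id, instance_class, engine):
    """Create a slave in the cluster unless one already exists.

    Returns True if a new instance was requested, False otherwise.
    """
    response = rds_client.describe_db_clusters(DBClusterIdentifier=cluster_id)
    members = response["DBClusters"][0]["DBClusterMembers"]
    # Any member that is not the writer is a read-only instance (a slave).
    if any(not member["IsClusterWriter"] for member in members):
        return False
    rds_client.create_db_instance(
        Engine=engine,
        DBClusterIdentifier=cluster_id,
        DBInstanceIdentifier=instance_id,
        DBInstanceClass=instance_class,
    )
    return True


# Usage (requires boto3 and AWS credentials):
#   conn = boto3.client("rds", region_name=region)
#   ensure_reader(conn, name_cluster, name_instance, instance_type, engine)
```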

With the addition of Amazon Aurora Autoscaling to our Doctrine sharding system, we are now able to handle the traffic to our databases at every load level. This has allowed us to rationalize costs and offer a better service.